When Weak LLMs Speak with Confidence, Preference Alignment Gets Stronger

CW-PO is a general framework that re-weights training samples by a weak LLM’s confidence scores and is compatible with various preference optimization objectives. Using only a fraction of the human preference annotations, CW-PO consistently outperforms baselines that rely on significantly larger annotation budgets.

Preference alignment is an essential step in adapting large language models (LLMs) to human values, but existing approaches typically depend on costly human annotations or large-scale API-based models. We explore whether a weak LLM can instead act as an effective annotator. Surprisingly, we find that training on only the subset of samples for which the weak LLM is highly confident leads to substantially better performance than using full human annotations. Building on this insight, we propose Confidence-Weighted Preference Optimization (CW-PO), a general framework that re-weights training samples by a weak LLM’s confidence. CW-PO offers three main advantages:

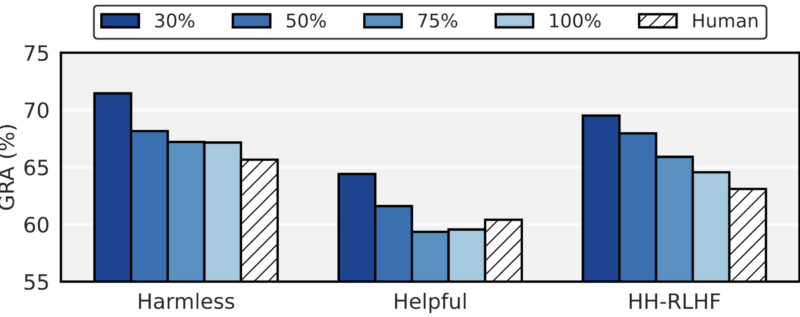

- High performance: A weak LLM can become an effective preference annotator using only a small amount of human-labeled data. Applied to DPO as CW-DPO, our method uses only 30% of the human annotations yet outperforms a model trained with full human annotations, and it remains effective with 20%.

- Low cost: Small weak annotators (under 0.5B parameters, even 125M) work well, making annotation much cheaper and more efficient than using humans or large API-based LLMs.

- Extensible: Once trained on a small human-labeled set, the weak annotator can be reused to label more preference data, making the approach practical and scalable.

Publication

When Weak LLMs Speak with Confidence, Preference Alignment Gets Stronger

Amirabbas Afzali*, Myeongho Jeon*, Maria Brbić.

@inproceedings{afzali2026when,
  title={When Weak {LLM}s Speak with Confidence, Preference Alignment Gets Stronger},
  author={Amirabbas Afzali and Myeongho Jeon and Maria Brbic},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026},
  url={https://openreview.net/forum?id=ROioaZ45Yz}
}

Key idea: Leveraging a weak LLM’s confidence score

Our goal is to align a strong LLM using supervision from a lightweight, computationally efficient LLM. We first fine-tune the weak model on a subset of preference triplets with human annotations and then use its predictions to label the remaining data. Under this setting, we find that leveraging the confidence predicted by a weak LLM can substantially improve the alignment of a stronger model. This naturally raises the next question: How can we systematically incorporate this observation into the alignment paradigm?

Confidence-Weighted Preference Optimization (CW-PO)

(1) Constructing a preference annotator. For the weak model, we take its pretrained backbone, bypass the last layer, and add a scalar output layer. We then optimize the entire model with the Bradley-Terry (BT) objective.
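To make the Bradley-Terry objective concrete, here is a minimal sketch of the pairwise loss on scalar scores. It uses plain Python floats for illustration; the function names `log_sigmoid` and `bradley_terry_loss` are our own, and an actual implementation would presumably operate on batched tensors produced by the scalar output layer.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def bradley_terry_loss(chosen_scores, rejected_scores):
    """Mean negative log-likelihood that each chosen response beats its rejected one.

    Under Bradley-Terry, P(chosen > rejected) = sigmoid(s_chosen - s_rejected).
    """
    losses = [-log_sigmoid(c - r) for c, r in zip(chosen_scores, rejected_scores)]
    return sum(losses) / len(losses)


# Hypothetical scalar scores for two (chosen, rejected) pairs.
loss = bradley_terry_loss([1.2, 0.3], [0.4, 0.9])
```

The loss shrinks as the annotator scores the human-preferred response further above the rejected one, and equals log 2 when the two scores are tied.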

(2) Generating preference labels. After fine-tuning, the weak LLM is applied to unlabeled pairs to determine preference labels.
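As an illustration of this labeling step, the sketch below turns two scalar scores into a preference label and a confidence value. The confidence definition used here, `|2p - 1|` with `p = sigmoid(score_a - score_b)`, is an assumption chosen for illustration; the paper gives the exact definition used by CW-PO. The function name `annotate` is hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def annotate(score_a: float, score_b: float):
    """Return (chosen, rejected, confidence) for a response pair.

    confidence is 0 when the annotator is indifferent and approaches 1
    as the score gap grows (illustrative definition, see lead-in).
    """
    p_a = sigmoid(score_a - score_b)  # Bradley-Terry probability that a is preferred
    if p_a >= 0.5:
        chosen, rejected = "a", "b"
    else:
        chosen, rejected = "b", "a"
    confidence = abs(2.0 * p_a - 1.0)
    return chosen, rejected, confidence


# Hypothetical scores: response b is clearly preferred.
label = annotate(-3.0, 3.0)
```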

(3) Aligning a strong large language model. Building on preference optimization (PO), we propose CW-PO, which introduces a confidence-based weight into the loss.

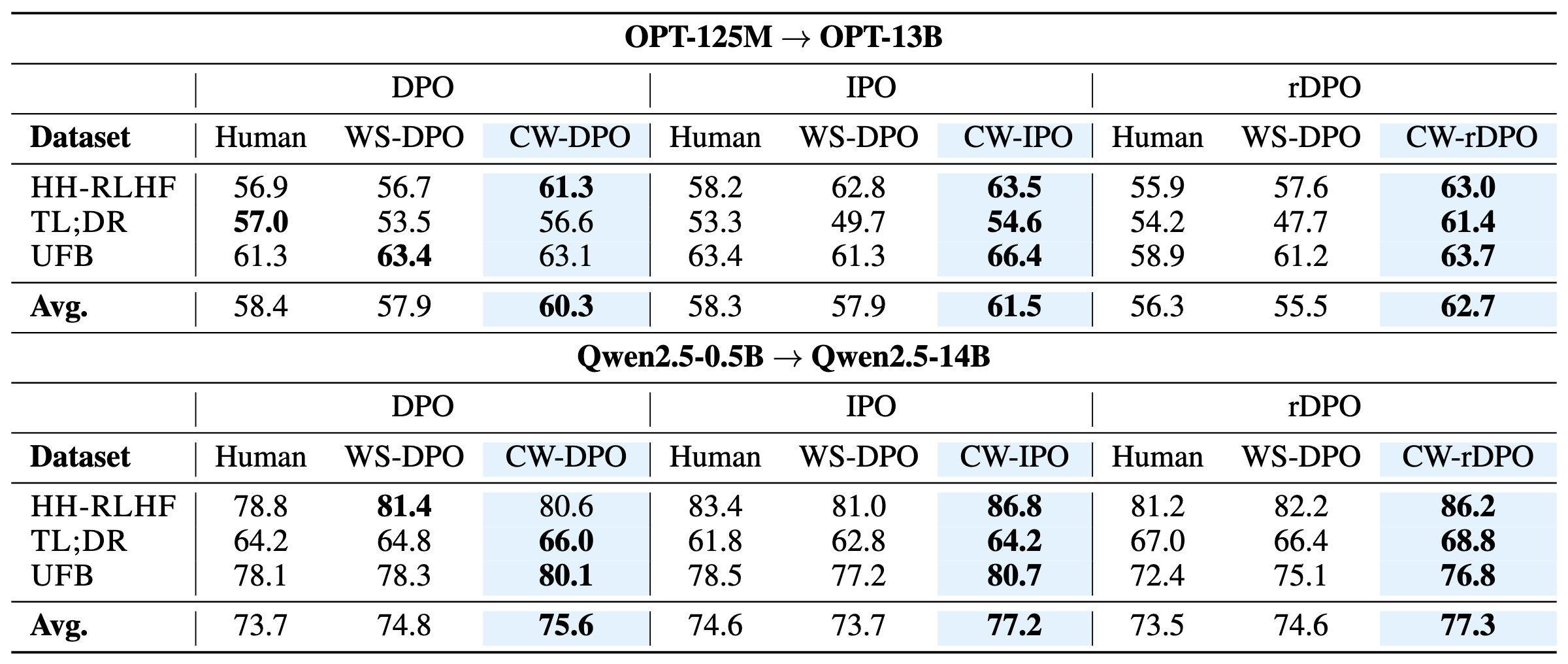

The proposed confidence weighting can be seamlessly integrated into any preference optimization (PO) objective. In our experiments, we show that it consistently improves performance when applied to direct preference optimization (DPO), identity PO (IPO), and robust DPO (rDPO). Please refer to the paper for the full mathematical formulation and details.
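For intuition, the sketch below applies a per-sample confidence weight to the standard DPO loss. It is a simplified illustration, not the paper's exact formulation: the multiplicative weighting, the division by batch size, the default `beta`, and the name `cw_dpo_loss` are all assumptions, and inputs are plain floats (sequence log-probabilities) rather than tensors.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def cw_dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected,
                confidences, beta=0.1):
    """Confidence-weighted DPO loss over a batch of preference pairs.

    Each argument except beta is a list with one entry per pair:
    log-probabilities of the chosen/rejected responses under the policy
    and the frozen reference model, plus the weak annotator's confidence.
    """
    total = 0.0
    for pc, pr, rc, rr, w in zip(policy_chosen, policy_rejected,
                                 ref_chosen, ref_rejected, confidences):
        # Standard DPO margin: scaled difference of policy/reference log-ratios.
        margin = beta * ((pc - rc) - (pr - rr))
        # Scale the per-sample DPO loss by the annotator's confidence.
        total += w * (-log_sigmoid(margin))
    return total / len(confidences)


# Hypothetical log-probabilities for one pair labeled with confidence 0.8.
loss = cw_dpo_loss([-1.0], [-2.0], [-1.5], [-1.5], [0.8])
```

With every weight set to 1 this reduces to ordinary DPO, while pairs the weak annotator is unsure about contribute proportionally less to the gradient.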

Evaluation setting and baselines

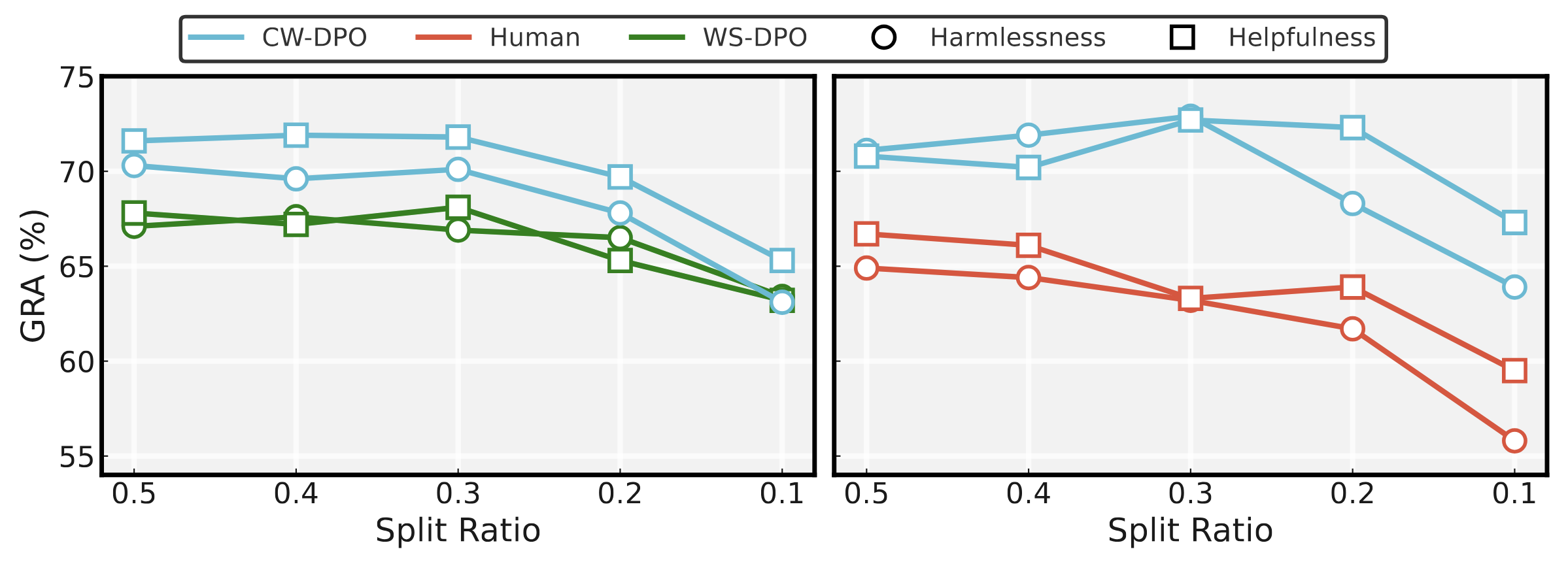

We evaluate model performance on preference optimization using HH-RLHF, TL;DR, and UFB. We compare our method against two baselines: (1) Human, which aligns a strong model using human-provided annotations, and (2) Weak LLM-Supervised PO (WS-PO), which aligns the strong model using annotations generated by a weak LLM.

Results

CW-PO improves alignment performance across different PO methods and model families. These results underscore two key insights: (i) CW-PO makes conventional preference alignment both more effective and cost-efficient. (ii) CW-PO serves as a plug-and-play enhancement for existing PO methods, improving their effectiveness without altering the underlying algorithm.

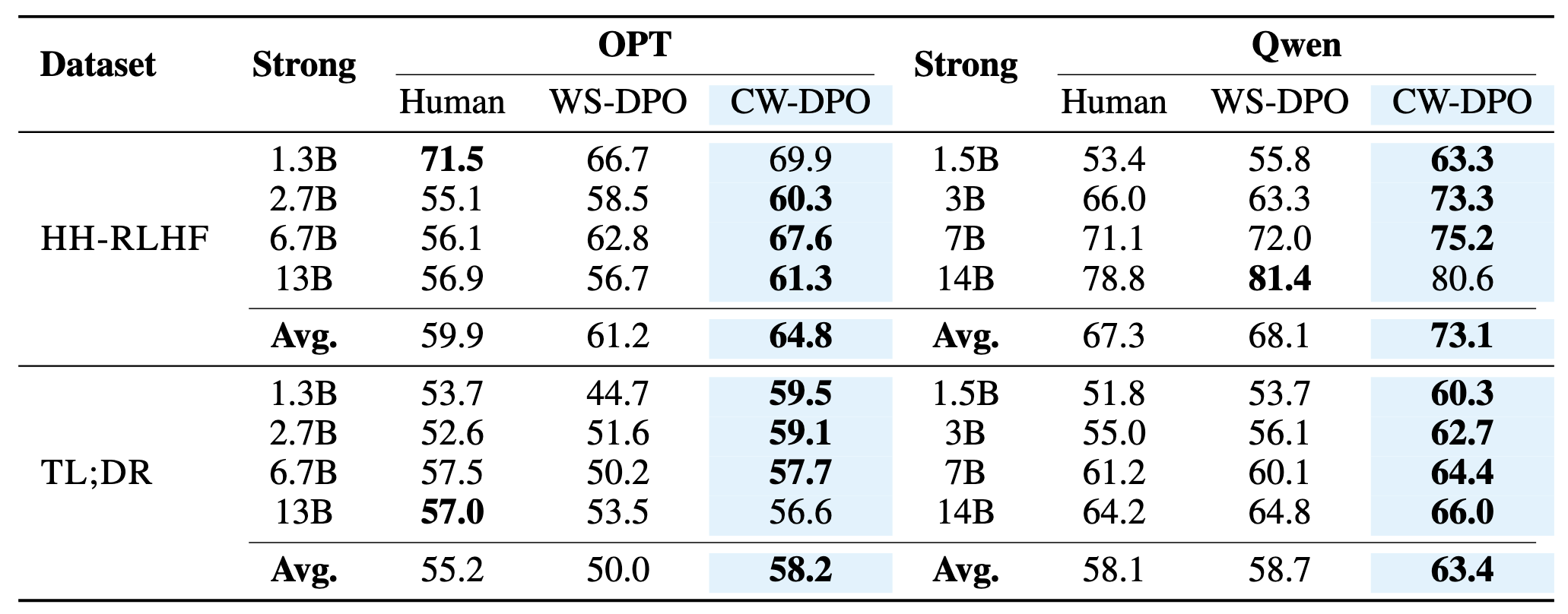

Different student models. We examine whether a weak model can effectively align a range of stronger policy models within the CW-PO framework. We vary the strong models across experiments and find that smaller and mid-sized models benefit more from CW-PO, whereas gains diminish as the strong model size increases.

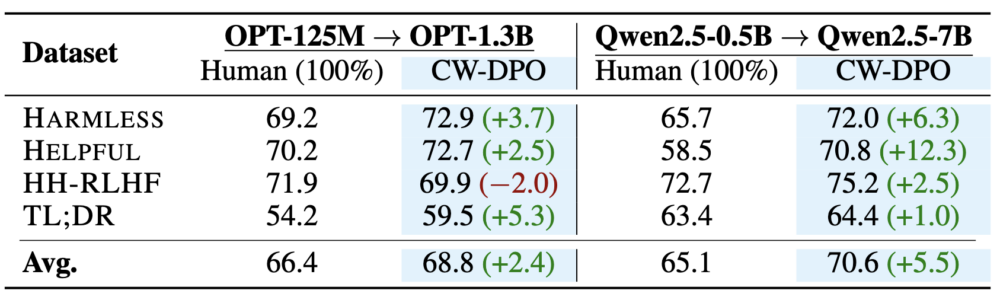

Comparison to using full human annotations. We further incorporate the dataset used to train the weak model into the strong model’s training, alongside the alignment dataset. Even under this setting, CW-DPO still outperforms the model trained with 100% human annotations.

Different split ratios for training the weak and strong models. We conduct two experiments to examine the effect of weak-model supervision: one where the strong model’s training data is fixed while varying the weak model’s data, and another where the total dataset is fixed and the labeled–unlabeled split changes. Across both settings, our method consistently outperforms direct DPO. Notably, even with only 20% of the annotations, it surpasses DPO trained on the fully human-annotated dataset.
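The fixed-total setting can be pictured as a single split of the preference dataset into a human-labeled portion for the weak annotator and a remainder that the annotator labels for the strong model. The helper below is a hypothetical sketch of such a split; the name `split_for_weak_supervision`, the shuffling, and the seed are assumptions, not the authors' data pipeline.

```python
import random


def split_for_weak_supervision(pairs, labeled_fraction, seed=0):
    """Split pairs into (human_labeled, to_be_weak_labeled).

    labeled_fraction is the share of pairs whose human annotations are
    used to train the weak annotator; the rest are labeled by that annotator.
    """
    rng = random.Random(seed)
    shuffled = list(pairs)
    rng.shuffle(shuffled)
    n_labeled = int(round(labeled_fraction * len(shuffled)))
    return shuffled[:n_labeled], shuffled[n_labeled:]


# Example: keep human labels for 20% of ten hypothetical pair IDs.
human_labeled, weak_labeled = split_for_weak_supervision(range(10), 0.2)
```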

Code

A PyTorch implementation of CW-PO will be released soon.

Contributors

The following people contributed to this work: